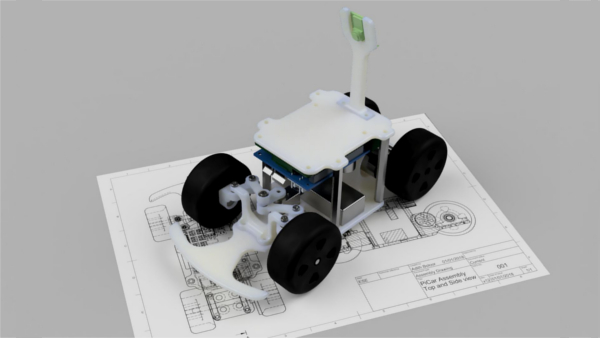

Our research group at Washington University in St. Louis had been using the CARLA autonomous driving simulator for some time to study how robust different self-driving algorithms are. We attacked two commonly used end-to-end driving models in CARLA: the Imitation Learning model and the Reinforcement Learning model. Both models take camera images as input and output throttle and steering values. We modified CARLA so that we could 'paint' lines on the road that mimic skid marks (or simply painted lines), and found that placing these lines in calculated ways could cause both models to fail. Paper: Attacking Vision-based Perception in End-to-End Autonomous Driving Models (arxiv)

Project Details

- Made for: Design Automation Conference 2019

- Location: DAC 2019, Las Vegas, Nevada

- Date: June, 2019

- Teammates: Karthik Garimella, Jinghan Yang

- Advisers: Dr Xuan Zhang, Dr Ayan Chakrabarti, Dr Yevgeniy Vorobeychik, Dr Christopher Gill

Project Story

We attacked the Imitation Learning (IL) and Reinforcement Learning (RL) driving models in the CARLA driving simulator. We used Bayesian Optimization to search for effective adversaries, that is, placements of painted lines that cause the models to fail.
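The post does not spell out the search setup, so here is a minimal sketch of what a Bayesian Optimization loop over painted-line parameters could look like. Everything in it is an assumption for illustration: the adversary is a single line described by two hypothetical parameters (`offset_m` along the road and `angle_deg` rotation), `toy_rollout` is a made-up stand-in for running a CARLA episode and scoring how far the car strays, and the optimizer uses a Gaussian-process surrogate with an expected-improvement acquisition. That is one common form of Bayesian Optimization, not necessarily the variant used in the paper.

```python
import math
import random


def toy_rollout(offset_m, angle_deg):
    """HYPOTHETICAL stand-in for one CARLA episode with a painted line.

    Returns a made-up "deviation from the baseline path" score. A real attack
    would run the simulator with the line at this offset and rotation instead.
    """
    bump = math.exp(-((offset_m - 12.0) / 5.0) ** 2)
    return 1.0 + bump * math.cos(math.radians(angle_deg - 30.0))


def rbf(a, b, length=0.2):
    """Squared-exponential kernel on points in the unit hypercube."""
    d2 = sum((ai - bi) ** 2 for ai, bi in zip(a, b))
    return math.exp(-d2 / (2.0 * length ** 2))


def cholesky(m):
    """Lower-triangular L with L @ L.T == m (m symmetric positive definite)."""
    n = len(m)
    low = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(low[i][k] * low[j][k] for k in range(j))
            if i == j:
                low[i][j] = math.sqrt(max(m[i][i] - s, 1e-12))
            else:
                low[i][j] = (m[i][j] - s) / low[j][j]
    return low


def forward_sub(low, b):
    """Solve low @ x = b by forward substitution."""
    x = []
    for i in range(len(b)):
        x.append((b[i] - sum(low[i][k] * x[k] for k in range(i))) / low[i][i])
    return x


def backward_sub_t(low, b):
    """Solve low.T @ x = b by back substitution."""
    n = len(b)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (b[i] - sum(low[k][i] * x[k] for k in range(i + 1, n))) / low[i][i]
    return x


def bayes_opt(objective, bounds, n_init=5, n_iter=15, n_candidates=200, seed=0):
    """Maximize objective over a box with a GP surrogate + expected improvement."""
    rng = random.Random(seed)
    dim = len(bounds)

    def scale(u):  # map unit-cube coordinates into the real parameter box
        return [lo + ui * (hi - lo) for ui, (lo, hi) in zip(u, bounds)]

    xs = [[rng.random() for _ in range(dim)] for _ in range(n_init)]
    ys = [objective(*scale(x)) for x in xs]

    for _ in range(n_iter):
        y_mean = sum(ys) / len(ys)
        yc = [y - y_mean for y in ys]  # zero-mean targets for the GP
        kmat = [[rbf(a, b) + (1e-4 if i == j else 0.0) for j, b in enumerate(xs)]
                for i, a in enumerate(xs)]
        low = cholesky(kmat)
        alpha = backward_sub_t(low, forward_sub(low, yc))
        best = max(yc)

        def expected_improvement(x):
            k = [rbf(x, xi) for xi in xs]
            mu = sum(ki * ai for ki, ai in zip(k, alpha))  # posterior mean
            v = forward_sub(low, k)
            sigma = math.sqrt(max(1.0 - sum(vi * vi for vi in v), 1e-12))
            z = (mu - best) / sigma
            cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
            pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
            return (mu - best) * cdf + sigma * pdf

        # Cheap acquisition step: score random candidates and keep the best one.
        candidates = [[rng.random() for _ in range(dim)] for _ in range(n_candidates)]
        x_next = max(candidates, key=expected_improvement)
        xs.append(x_next)
        ys.append(objective(*scale(x_next)))

    i_best = max(range(len(ys)), key=lambda i: ys[i])
    return scale(xs[i_best]), ys[i_best]


# Hypothetical search space: offset 0-30 m along the road, rotation -90..90 deg.
params, score = bayes_opt(toy_rollout, bounds=[(0.0, 30.0), (-90.0, 90.0)])
print(f"offset={params[0]:.1f} m, angle={params[1]:.1f} deg, score={score:.3f}")
```

In a real setup every objective call is a full simulator run, which is why a sample-efficient method fits here: the surrogate picks which line placement is worth testing next instead of sweeping the whole parameter space.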

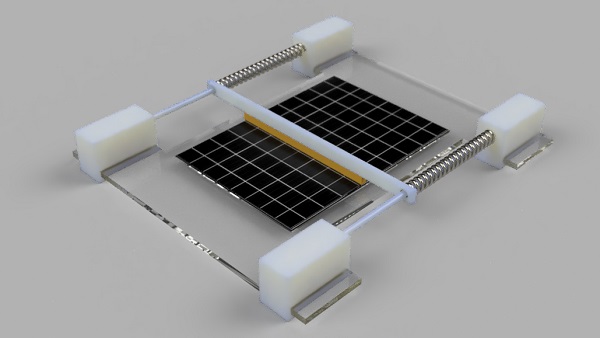

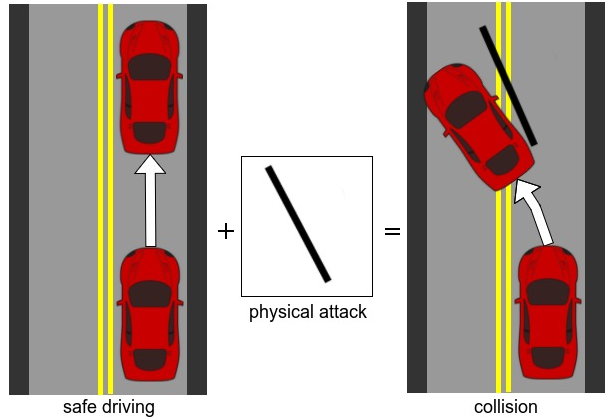

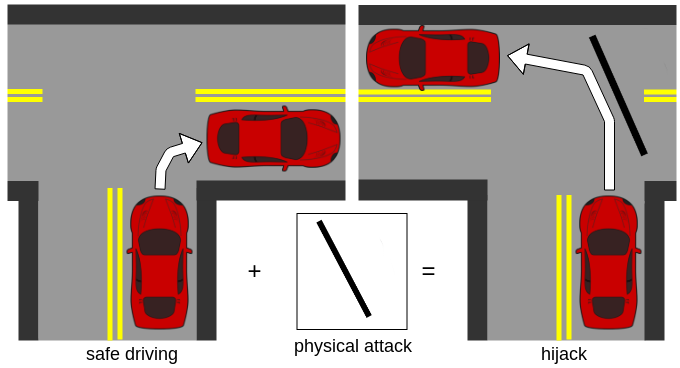

The above figure shows the objective of our attack. In the baseline scenario, the IL or RL model turns left or right at a corner, or drives in a straight line, while staying in its lane. The attack aims to force the car to veer out of its lane and crash.

The above gif shows an example of a successful attack in which the painted black lines cause the vehicle to veer off the road and crash.
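One way to turn "veer out of its lane" into a number an optimizer can maximize is to compare the attacked run against the baseline run. This is a hypothetical scoring scheme, not the paper's objective: it assumes both runs are logged as time-aligned lists of (x, y) positions in meters, and the 1.75 m half-lane width (a 3.5 m lane) is an assumed value.

```python
import math


def _aligned_distances(baseline, attacked):
    """Distances (m) between positions recorded at the same time steps."""
    if not baseline or len(baseline) != len(attacked):
        raise ValueError("trajectories must be non-empty and time-aligned")
    return [math.dist(p, q) for p, q in zip(baseline, attacked)]


def trajectory_deviation(baseline, attacked):
    """Mean distance between the baseline and attacked paths (a crude proxy)."""
    d = _aligned_distances(baseline, attacked)
    return sum(d) / len(d)


def veered_off_lane(baseline, attacked, half_lane_width=1.75):
    """True if the attacked car ever strays more than half a lane (assumed width)."""
    return max(_aligned_distances(baseline, attacked)) > half_lane_width


baseline = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
attacked = [(0.0, 0.0), (1.0, 0.5), (2.0, 1.5)]
print(trajectory_deviation(baseline, attacked))  # mean of 0, 0.5 and 1.5
print(veered_off_lane(baseline, attacked))  # False: peak deviation is 1.5 m
```

Comparing positions at equal time steps also penalizes a car that merely slows down on the correct path, so a real objective might instead measure lateral distance from the lane center.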

The above figure shows a similar setup, except that in the baseline case the driving model is tasked with making a right turn at an intersection. The attack aims to force the vehicle to turn left at the intersection instead.

The above gif demonstrates that we can place the adversarial lines so that the IL model is confused enough to turn left instead of right at the intersection. Together, these results show that vision-based self-driving models can be attacked using physical adversaries.
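For the intersection scenario, success is a different event: the vehicle turns the wrong way. A hypothetical way to detect that from a logged path is to sum the signed heading changes along it. This sketch assumes a right-handed x-y frame with counter-clockwise angles positive, so a left turn gives a positive total. The 45 degree threshold is arbitrary, and the sign convention would need to be adapted to the simulator's own coordinate frame.

```python
import math


def net_heading_change(points):
    """Sum of signed heading changes (degrees) along a polyline of (x, y) points."""
    headings = [math.atan2(q[1] - p[1], q[0] - p[0]) for p, q in zip(points, points[1:])]
    total = 0.0
    for h0, h1 in zip(headings, headings[1:]):
        # Wrap each step into [-pi, pi) so crossing the +/-180 degree seam is handled.
        total += (h1 - h0 + math.pi) % (2.0 * math.pi) - math.pi
    return math.degrees(total)


def classify_turn(points, threshold_deg=45.0):
    """Label a path as "left", "right" or "straight" (counter-clockwise = left)."""
    change = net_heading_change(points)
    if change > threshold_deg:
        return "left"
    if change < -threshold_deg:
        return "right"
    return "straight"


baseline_path = [(0.0, 0.0), (0.0, 1.0), (0.0, 2.0), (1.0, 2.0), (2.0, 2.0)]
attacked_path = [(0.0, 0.0), (0.0, 1.0), (0.0, 2.0), (-1.0, 2.0), (-2.0, 2.0)]
print(classify_turn(baseline_path), classify_turn(attacked_path))  # right left
```

Under these assumptions, the right-to-left attack counts as successful when `classify_turn` returns "right" for the baseline path and "left" for the attacked one.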